Artificial intelligence is at the center of an enterprise revolution, and open-source tools are driving many of its new initiatives and capabilities. From cutting costs to boosting efficiency, open source is fueling this expansion. The numbers bear this out: more than 58% of companies today use open-source tools in at least half of their AI and machine learning projects, and 34% rely on them in three-quarters or more of their initiatives [1]. Open-source AI models have fundamentally changed how companies develop and use AI, offering an alternative to the rigidity, cost, and inflexibility of proprietary systems.

As their importance grows along with the number of applications, so does concern over their opacity. It is becoming increasingly difficult to distinguish truly open models from those that are “openwashed” (falsely represented as open while real access, usage, or transparency is restricted), which leaves organizations exposed to ethical and operational risks. [2]

Within the next decade, the ability to assess and ensure true transparency will be a competitive advantage and part of a moral imperative. As we step into an era of new regulations, the demand for higher accountability and openness will become the key differentiator. Organizations that begin to make that transition today will be very well positioned to excel both technologically and reputationally in an AI-driven world. Let’s explore the importance of model transparency, how it can be achieved, and what enterprises can do proactively to set the stage for a promising, transparent future of AI.

The Risks of Openwashing in AI Models

When it comes to AI models, superficial claims of openness expose enterprises to potential liabilities that impact compliance, security, and trust.

Key Risks:

- Regulatory pitfalls: Ambiguous or restrictive licensing could mean expensive regulatory risk.

- Security exposure: Undocumented or hidden components may contain “backdoors” or vulnerabilities.

- Bias amplification: Partial disclosure of data sets can entrench systemic bias within enterprise AI systems.

- Operational uncertainty: Non-reproducibility affects performance validation and scalability.

- Reputation damage: “Openwashed” models put the enterprise at risk of losing the trust of customers and stakeholders.

Model Openness Framework — What is It and Why is It Important?

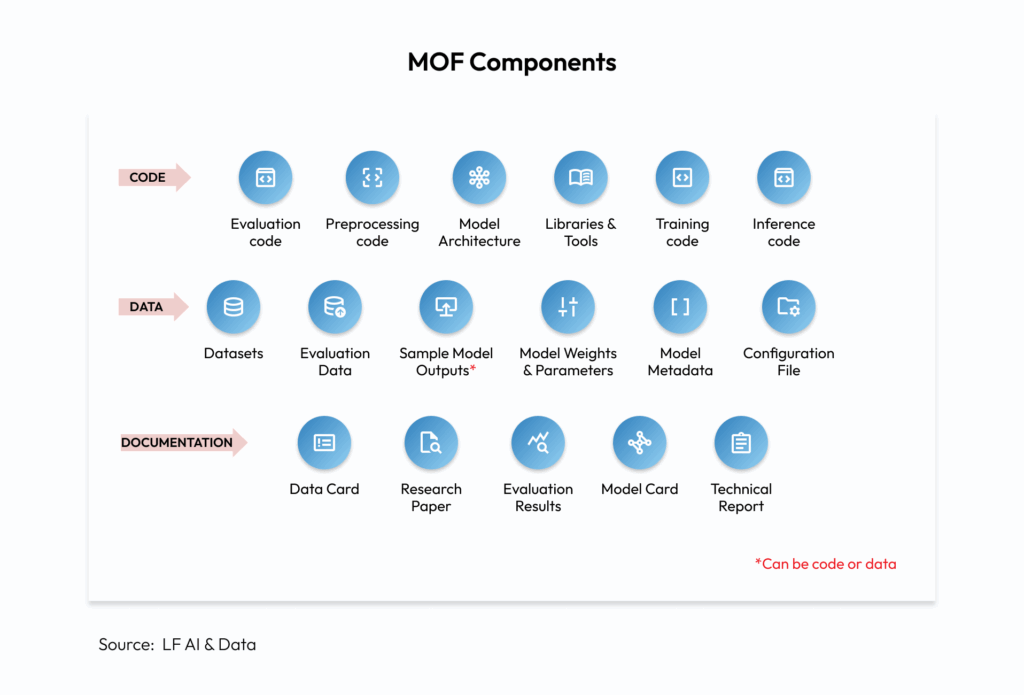

With so many open-source models available in the market, how can enterprises trust that the model is truly “open”? This is where the Model Openness Framework (MOF) comes into play. MOF is a practical set of standards and checklists used to evaluate the transparency and completeness of an AI model. By going beyond open code, it assesses whether a model’s architecture, training data, weights, documentation, and licensing are all publicly available and under what licenses.

Figure 1: The Model Openness Framework identifies 17 components that form a complete model release.

These components build trust by revealing how a model was built, trained, and tested. This helps enterprises assess risks, verify claims, and understand the limitations of a model. By outlining safety protocols and usage limitations, MOF also encourages responsible implementation, which helps make AI transparency both meaningful and accountable.

Utilizing these foundational elements, the MOF establishes three categories of model openness.

Figure 2: The MOF defines openness across three primary tiers.

This granularity allows organizations to evaluate model suitability objectively, avoiding surprises such as hidden code, insufficient documentation, or vague licenses.
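As a rough illustration of how such a tiered checklist could be applied, the sketch below classifies a model release by the components it publishes under open licenses. The component names and the per-tier requirements are hypothetical placeholders chosen for this example; they are not the MOF's official 17 components or tier definitions, which should be taken from the framework itself.

```python
from typing import List, Set, Tuple

# Hypothetical tier requirements, ordered from most to least open.
# These sets are illustrative assumptions, not the MOF's official lists.
TIERS: List[Tuple[str, Set[str]]] = [
    ("Class I", {"architecture", "weights", "documentation",
                 "training_code", "training_data"}),
    ("Class II", {"architecture", "weights", "documentation", "training_code"}),
    ("Class III", {"architecture", "weights", "documentation"}),
]


def classify(open_components: Set[str]) -> str:
    """Return the most open tier whose required components are all openly released."""
    for tier, required in TIERS:
        if required <= open_components:
            return tier
    return "unclassified"


def missing_for(open_components: Set[str], tier: str) -> Set[str]:
    """Return the components still needed to reach the named tier."""
    required = dict(TIERS)[tier]
    return required - open_components


# A release that publishes weights and docs but no training code or data.
release = {"architecture", "weights", "documentation"}
print(classify(release))                          # Class III
print(sorted(missing_for(release, "Class II")))   # ['training_code']
```

Ordering the tiers from most to least open means the first match is always the highest tier a release qualifies for, and `missing_for` turns the same table into a gap report for procurement or governance reviews.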

Why Transparency is Important in Enterprise AI

Transparency is not just a buzzword in enterprise AI; it is a necessary precondition to trust, accountability, and regulatory compliance.

The Model Openness Framework (MOF) helps enterprises:

- Cut through openwashing: It identifies which models are open enough for responsible adoption, thereby reducing the likelihood of “openwashed” deployments.

- Strengthen compliance and trust: Clearer standards make AI results easier to trust, audit, and reproduce, which is essential for industries operating in a regulated environment.

- Make informed decisions: Leaders can easily review the various models available based on openness and make an informed and appropriate choice for their business needs and risk profile.

Transparent AI systems are simpler to audit, govern, and scale safely across enterprises. Without these factors, models remain unverifiable, putting enterprises at risk of poor decision-making, regulatory penalties, and damage to their reputation.

Inside Forrester’s Open-Source AI Model Openness Framework

Forrester’s Model Openness Framework (MOF) is an approach to evaluating the transparency and governability of AI models throughout their entire lifecycle. It assesses the extent to which stakeholders can observe, control, and modify models to ensure compliance, trust, and accountability.

As its core components, it includes:

- Data transparency – clarity of inputs, sources, and quality.

- Model explainability – the ability to interpret decisions.

- Operational visibility – monitoring and auditability of models in use.

- Governance controls – policies, roles, and compliance mechanisms.

Together, these components help enterprises deploy AI ethically and responsibly without holding back innovation.

Recognizing the fragmented open-source AI model (OS-AIM) landscape, Forrester Research developed the Open-Source AI Model Openness Framework. OS-AIMs promise flexibility for enterprises; however, their level of openness varies widely. These models also vary in terms of transparency, rights of use, and community engagement, all of which can affect the level of trust as well as deployment and long-term value. The framework helps technology executives and AI governance experts assess how open a given model really is, thereby bridging the gaps between technology adoption, business requirements, and risk appetite. [3]

Reproducibility in AI: Key Challenges and Solutions

The principles of science are uncomplicated yet profound: when you apply the same methodology to identical components, you can expect consistent and reliable results every time. In the AI world this is called “reproducibility”: achieving the same or similar results using the same dataset and AI algorithm within the same environment. It ensures that models yield reliable, repeatable performance, which is imperative for safety, trust, and robust deployment. [4]

What does it mean for enterprises? If you can’t reproduce results, you can’t fully trust your AI system’s reliability. The rapid development and advancement of AI require reproducibility to keep pace with the innovation and complexity of AI models.

An analysis of NeurIPS (Conference on Neural Information Processing Systems) revealed that almost 70% of AI researchers acknowledged having difficulty reproducing the results of others, even within the same subfield. Another study suggests that less than a third of AI research is reproducible or verifiable. [5]

Here are some of the key challenges that arise with reproducibility in AI:

- Lack of record-keeping: Without proper logs of data sets, code versions, and hyperparameters, results are hard to repeat. In fact, only about 5% of researchers share code, and fewer than 30% share test data, contributing to a well‑documented reproducibility crisis. [6]

- Randomness in model training: Variations in software versions, hardware non-determinism, and random seeds create subtle shifts in outcomes that are hard to track.

- Complexity and interdependencies: Current AI models are based on complex architectures and various dependencies, making it difficult to replicate the environment and evaluate it accurately.
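The effect of random seeds on training outcomes can be shown with a small sketch. The `train_stub` function below is a hypothetical stand-in for a real training loop and uses only Python's standard `random` module; it does not address hardware non-determinism or library version drift, which need separate controls.

```python
import random
from typing import List


def train_stub(seed: int, steps: int = 5) -> List[float]:
    """Simulate a training run whose updates depend on random draws.

    A dedicated Random instance keeps the run isolated from any other
    code that touches the global random state.
    """
    rng = random.Random(seed)
    weight = 0.0
    history = []
    for _ in range(steps):
        weight += rng.uniform(-1.0, 1.0)  # stand-in for a stochastic update
        history.append(round(weight, 6))
    return history


# Same seed, same trajectory; a different seed gives a different one.
print(train_stub(42) == train_stub(42))  # True
print(train_stub(42) == train_stub(43))
```

Recording the seed alongside each run is what makes a later rerun comparable; passing an explicit generator rather than relying on global state is one common way to keep that guarantee when several components draw random numbers.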

For enterprises looking to enhance AI reproducibility, adopting a few best practices can really help streamline the process:

- Thorough documentation: Maintain a complete record of model and data cards, code, and details of the environment, which allows third-party verification.

- Standardized evaluation benchmark: Use datasets and metrics that are commonly accepted in the industry for fair comparison.

- Centrally store and access data: Establish governed, centralized data repositories to prevent data silos and ensure that teams have access to common, approved versions of the data.

- Encourage open sharing: Foster the open sharing of code, models, and data within the team or through open-source channels, as long as it complies with security policies, to facilitate peer review and validation.

- Automate pipelines: Use automated pipelines for training, evaluation, and deployment of the model to eliminate manual errors and ensure consistency from development to production.
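One way to put the documentation practice above into code is a run manifest that an automated pipeline writes for every training job. The sketch below is a minimal illustration using only the standard library; the field names and the `code_version` string are assumptions for this example, not a prescribed schema.

```python
import hashlib
import json
import platform
import sys


def build_manifest(dataset: bytes, hyperparameters: dict, code_version: str) -> dict:
    """Capture what a third party would need to rerun or verify a training job."""
    return {
        "dataset_sha256": hashlib.sha256(dataset).hexdigest(),
        "hyperparameters": hyperparameters,
        "code_version": code_version,  # e.g. a commit hash supplied by the pipeline
        "python_version": platform.python_version(),
        "platform": sys.platform,
    }


def manifest_id(manifest: dict) -> str:
    """Hash a canonical JSON form so identical runs get identical IDs."""
    canonical = json.dumps(manifest, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


data = b"label,text\n1,hello\n0,world\n"
run_a = build_manifest(data, {"lr": 0.001, "epochs": 3}, "abc123")
run_b = build_manifest(data, {"lr": 0.01, "epochs": 3}, "abc123")
print(manifest_id(run_a) == manifest_id(run_a))  # True
print(manifest_id(run_a) == manifest_id(run_b))  # False
```

Storing the manifest next to the model artifact in a governed repository gives reviewers a single record to compare when two runs disagree.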

Understanding and Mitigating Open-Source AI Risks

Open-source AI offers significant flexibility and innovation, but it also comes with its share of risks, so businesses must formulate effective strategies to address them. With the right approach, the inherent risks of open-source AI can be understood and kept manageable.

Potential risks

- Security Threats: A public model may contain malicious or nefariously hidden code that establishes backdoors or allows unauthorized activities.

- Ethical Threats: Data sets that remain undisclosed or are misrepresented can embed social bias and pose ethical pitfalls.

- Data Privacy Breaches: Fine-tuning open models with sensitive internal data can lead to unintended data leaks if protections aren’t in place.

- Compliance and Licensing Issues: Ambiguous or restrictive open-source licenses may limit the commercial use of models, potentially leading to legal complications.

Enterprises must incorporate risk evaluation tools and governance frameworks whose focus extends beyond mere functionality.

Mitigation strategies

- Conduct Rigorous Security Audits: Vet all open-source models and dependencies for vulnerabilities before use.

- Implement Strict Data Handling Protocols: Ensure data anonymization and controlled access when using open models internally.

- Monitor and Mitigate Bias Regularly: Audit outputs using fairness tools and retrain models if necessary to minimize bias.

- Review Open-Source Licenses Thoroughly: Involve legal teams to ensure compliance with licensing terms, especially for commercial use.
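Parts of the security and licensing checks can be automated as a gate that runs before a model enters internal use. The sketch below is a simplified illustration: the `ModelRelease` fields and the contents of `APPROVED_LICENSES` are assumptions made for this example (the identifiers follow SPDX naming), and a real allowlist should come from your legal team. It complements a full security audit and does not replace one.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import List

# Example allowlist of SPDX license identifiers; an assumption for this sketch.
APPROVED_LICENSES = {"Apache-2.0", "MIT", "BSD-3-Clause"}


@dataclass
class ModelRelease:
    name: str
    license_id: str        # license declared by the publisher
    artifact: bytes        # downloaded model file contents
    published_sha256: str  # digest the publisher lists for the artifact


def review(release: ModelRelease) -> List[str]:
    """Return a list of findings; an empty list means the basic checks passed."""
    findings = []
    if release.license_id not in APPROVED_LICENSES:
        findings.append(f"license '{release.license_id}' needs legal review")
    actual = hashlib.sha256(release.artifact).hexdigest()
    # Constant-time comparison of the computed and published digests.
    if not hmac.compare_digest(actual, release.published_sha256.lower()):
        findings.append("artifact checksum does not match the published digest")
    return findings


weights = b"fake-model-weights"
ok = ModelRelease("demo-model", "Apache-2.0", weights,
                  hashlib.sha256(weights).hexdigest())
print(review(ok))  # []
```

Returning findings rather than raising on the first problem lets a pipeline report every issue to reviewers in one pass.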

“The role of open-source software in enterprise AI cannot be understated. While open-source AI democratises access to tools and enriches enterprise capabilities, its effective adoption depends heavily on mitigating challenges such as governance and operational complexity.”

– Charlie Dai, VP and principal analyst at Forrester

Tips and Strategies for Adopting Open-Source AI in Enterprises

Enterprise adoption of open-source AI is no longer the exception; it’s the expected route to scalable, innovative, and cost-effective digital transformation. However, moving from tactical use to strategic success requires deliberate planning and robust frameworks:

- Align with Openness Frameworks: Utilize MOF to categorize, compare, and select models that meet your governance and innovation requirements.

- Invest in Skills: Upskill teams to manage, audit, and adapt open-source models, thereby building organizational confidence in tooling and operations.

- Hybrid Architectures: Blend open-source and proprietary solutions for optimal performance, control, and security.

- Focus on Documentation and Process: Mandate the use of open licenses, complete documentation, and transparent release protocols for every deployment.

- Community Engagement: Participate in open-source AI communities to gain early access to innovations, share best practices, and contribute to improving model robustness.

The Role of Agentic AI: The New Frontier of Openness

The evolution of agentic AI systems represents a significant shift for enterprises, as these agents are capable not only of performing tasks but also of making decisions independently. As these systems grow more autonomous, the importance of openness and transparency becomes even greater. Organizations need to have a clear understanding of how agentic AI operates, particularly in terms of data access and decision-making processes. This understanding is essential for effective governance, building trust, and ensuring compliance.

An open agentic AI framework can help organizations understand and audit the flow of tasks, mitigate the risks of unexpected behavior, and reduce the risk of misuse. They also enable secure interaction between human workers and AI systems, allowing organizations to leverage the efficiency of autonomous systems while maintaining oversight and control.
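To make "auditing the flow of tasks" concrete, here is a minimal sketch of a tamper-evident audit trail for agent actions. It is an assumption-laden illustration and does not describe any specific agent framework: the event fields and the `append_event` and `verify` helpers are hypothetical. Each entry stores the hash of the previous one, so later edits to the log become detectable.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(body: Dict[str, str]) -> str:
    """Hash a canonical JSON encoding of an entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_event(log: List[Dict[str, str]], agent: str, action: str, detail: str) -> None:
    """Append an agent action, chained to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"agent": agent, "action": action, "detail": detail, "prev_hash": prev_hash}
    log.append({**body, "hash": _digest(body)})


def verify(log: List[Dict[str, str]]) -> bool:
    """Check that every entry is intact and linked to its predecessor."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


trail: List[Dict[str, str]] = []
append_event(trail, "billing-agent", "read", "customer record 1001")
append_event(trail, "billing-agent", "decide", "issue refund")
print(verify(trail))  # True
trail[0]["detail"] = "customer record 9999"  # simulate tampering
print(verify(trail))  # False
```

A log like this records which agent touched which data and what it decided, which is the raw material for the oversight and compliance reviews described above.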

Agentic AI amplifies the benefits of openness, i.e., autonomy, transparency, and trust, transforming AI from a mere tool into a collaborative partner in enterprise transformation.

The Future of AI is Built on Openness and Transparency

Open-source AI models are influencing enterprise technology in exciting ways. However, it is structured openness, defined, measured, and managed through frameworks such as MOF, that turns potential into real business value.

The future of enterprise AI also hinges on transparency and openness that allow reproducibility at scale. When organizations prioritize clear data practices, explainable models, and accountable systems, they build trust and compliance while unlocking AI’s true potential securely and responsibly.

At OneReach.ai we are embracing this future by building orchestrated Agentic AI systems that are grounded in transparency, reproducibility, and responsible automation.

FAQs

1. What is a Model Openness Framework (MOF)?

A Model Openness Framework is a standardized system for evaluating how transparent and complete an open-source AI model is across its data, code, documentation, and licensing.

2. Why is using an MOF important for enterprises?

MOFs help organizations identify trustworthy, reproducible AI models, reduce risk, and ensure regulatory compliance when adopting open-source AI.

3. How does the Model Openness Framework classify AI models?

Forrester’s MOF uses a three-tier system — Class I (maximum transparency), Class II (partial openness with some restrictions), and Class III (basic openness) — based on the number of components made public.

4. What are the main benefits of selecting a fully open (Class I) model?

Class I models provide maximum transparency, making them easier to audit, reproduce, and deploy in high-trust or regulated environments.

5. Can using a Model Openness Framework speed up AI innovation?

Yes, MOFs enable faster, informed decision-making, so technology teams can confidently adopt and deploy open-source AI solutions that meet business and compliance needs.