Enterprises are deploying AI agents faster than they are governing them. AI guardrails are one of the primary mechanisms that close that gap. Without them, every agent your organization runs is making decisions you did not explicitly authorize.

The risks are not theoretical. According to Gartner®, “by 2028, 25% of enterprises will have experienced major business disruptions due to inadequate AI governance and guardrail practices.” McKinsey’s 2026 AI Trust Maturity Survey found that security and risk concerns are the top barrier to scaling agentic AI and that only around 30% of organizations have reached meaningful maturity in agentic AI governance.

The pattern is consistent across industries: capability is scaling faster than control. Agentic systems handle sensitive data, take real-world actions, and interact with customers at scale. When they operate outside defined boundaries, whether due to prompt manipulation, poor data quality, or inadequate access controls, the consequences land on the organization, not the model provider.

What Are AI Guardrails in Agentic Systems?

Gartner defines AI guardrails as “practical controls that keep conversational AI applications aligned with enterprise governance, business and legal priorities, as well as with security and risk requirements.” In agentic systems, where AI agents retrieve data, take actions, and execute multi-step workflows autonomously, guardrails govern what an agent can say, what data it can access, what actions it can take, and how its outputs are validated before they reach users or downstream systems.

Guardrails are not restrictions on capability. Rather, they enable AI agents to operate safely.

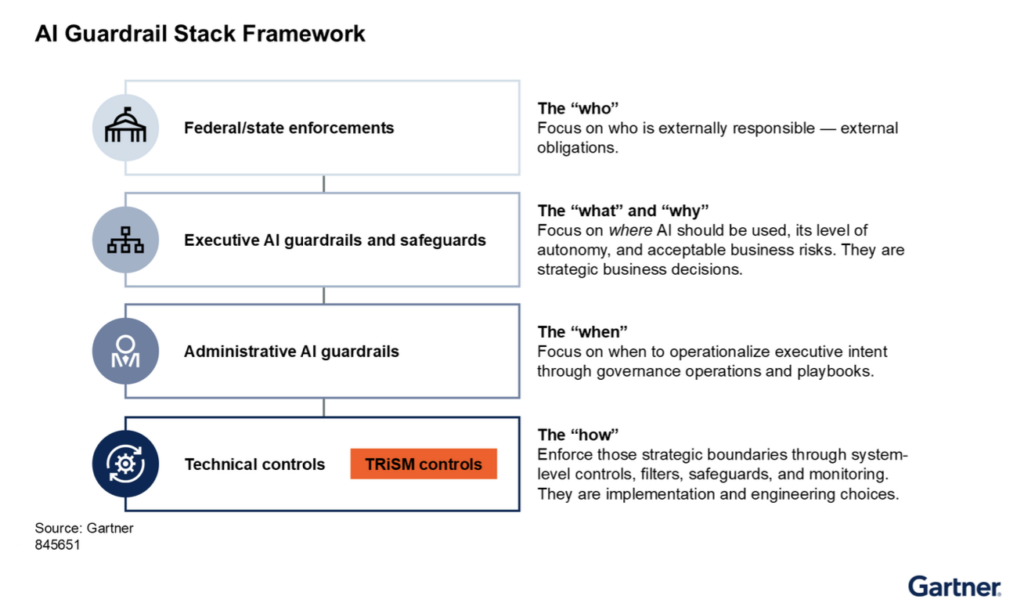

Figure 1: AI Guardrail Stack Framework

Source: Gartner, “Deploy AI Guardrail Stack: The Minimum Standard for Responsible Enterprise AI Protection,” Carlton Sapp and Avivah Litan, 20 January 2026, ID G00845651. Reprinted with permission.

Standard application controls, such as authentication, input validation, and role-based access, were designed for deterministic software, but many carry forward into agentic systems and remain effective. The strongest controls are architectural and follow the principle of least privilege: an agent should have access only to the specific data and capabilities required for its immediate task, and nothing more. An agent that is never granted access to PII data does not need a guardrail to prevent it from sharing that data. The boundary does the work.

The failure modes emerge where architectural controls end and runtime reasoning begins. An agent operating within a poorly scoped authorization model does not stop when it reaches an implicit boundary. It continues, in whatever direction its inputs and objectives allow. That is where purpose-built governance becomes non-negotiable. Operating boundaries, policy enforcement at the architecture level, and full audit lineage are not replacements for standard controls, but the layer that governs what those controls cannot anticipate.

Why the Risk Surface in Agentic Systems Is Different

Traditional software fails in predictable ways. An agentic system fails in ways that often go unnoticed for longer periods of time.

In government, an AI agent processing benefits applications or responding to citizen inquiries can produce incorrect eligibility determinations at scale, with no human reviewer in the loop and no output validation catching the error before it affects thousands of cases. Unlike previous forms of automation like RPA, where a failure would lead to downtime, agentic AI fails far less frequently, if at all, compounding errors across every case it touches.

In logistics, an agent coordinating shipment routing and supplier communications can act on manipulated or outdated data, committing the organization to contracts, routes, or inventory decisions it never approved, cascading across a supply chain before the error surfaces.

In retail, a customer-facing agent retrieving from a live product or policy knowledge base can provide incorrect, confident information about pricing, availability, or returns, creating liability and eroding trust at the exact moment a customer is making a purchase decision.

The failure modes are not isolated errors. They are systemic. Each capability that makes agents valuable, including autonomous action, live data retrieval, multi-step workflow execution, and always-on availability, also expands the surface area where an ungoverned agent causes damage. And that damage scales with deployment. One agent operating outside its defined boundaries is a governance problem. Ten thousand agents operating that way is an enterprise liability.

Standard application controls were not designed for systems that reason probabilistically and act autonomously. Authentication, input validation, and role-based access remain necessary and effective within agentic architectures. But they were built for deterministic software. Purpose-built guardrails govern what standard controls cannot anticipate, including runtime reasoning, novel outputs, and autonomous action across systems. They are the additional layer, and not a replacement.

What Is Enterprise AI Governance?

Read More7 Key Guardrail Categories

Gartner identifies seven categories of guardrails that enterprise leaders need to address in agentic AI systems. Each maps to a distinct risk class.

- Prompt injection mitigation addresses the highest-severity threat to LLM-based systems. Attackers embed instructions inside inputs (e.g., emails, documents, web content) that cause the agent to override its system instructions, extract sensitive data, or take unintended actions. A documented vulnerability in Microsoft 365 Copilot (“EchoLeak”) demonstrated that hidden instructions inside an ordinary email could cause Copilot to automatically exfiltrate sensitive data from a user’s Microsoft 365 environment (chat histories, OneDrive files, SharePoint documents) without the user initiating any action. Mitigation requires layered controls at both input and output, not a single filter.

- Content moderation covers harmful, prohibited, or policy-violating content across text and multimodal inputs. The failure mode here is less about external attack and more about what an unmoderated agent will produce when users push its boundaries or when its training data reflects biases it has no mechanism to correct at runtime.

- PII and PHI protection governs personally identifiable information and protected health information. In June 2025, security researchers discovered that McDonald’s AI hiring tool had exposed records from up to 64 million job applicants through an administrator account still using the default password “123456.” The tool itself was not compromised. The access controls around it were not appropriate to the data it held.

- Identity and access management (IAM) controls define what an agent can see and do on behalf of a user or system. When agents are granted administrator-level access rather than the minimum permissions their function requires, the blast radius of any failure expands significantly.

- Ethics and bias issues address outputs that discriminate, reinforce stereotypes, or produce systematically different results for different groups of users. Bias enters through training data, through model design, and through the interpretation of outputs. Without detection controls, that kind of output operates at scale before anyone notices the pattern.

- Hallucination mitigation and RAG content validation address the same underlying risk from two directions. Hallucination mitigation focuses on what the agent generates. RAG content validation focuses on what the agent retrieves and trusts. An agent that produces plausible but incorrect output does not announce the error. It delivers a wrong answer with the same confidence as a correct one. In practice, the more dangerous failure in enterprise deployments is not that the agent invents information. It is that the agent trusts what it retrieves. Outdated policy documents, conflicting data sources, and low-relevance matches can all produce authoritative-sounding responses that are simply wrong. Source citation requirements, retrieval validation, and human review for high-stakes outputs are what keep that risk manageable.

Guardrails Are Not a Single Layer

One of the more consequential governance mistakes is treating guardrails as a single technical control (a filter, a classifier, a policy prompt) rather than a structured system.

Gartner mentions an AI guardrail stack as “a business-specific and engineered safeguarding control framework for organizations. It includes the alignment of regulatory requirements, executive guardrails implementing the organization’s AI and information protection strategy, administrative standards for operations, and technical controls to ensure compliance.”

The logic is straightforward. If one element fails, others are in place. A technical filter that misses a prompt injection attempt does not cause a breach if the IAM controls are configured correctly. An agent that generates a hallucinated answer does not cause a compliance failure if the human-in-the-loop review process catches it before it is acted on.

Gartner projects that “by 2027, 80% of large enterprises will have clearly defined, reusable guardrail practices for their agentic AI systems.” The 20% that do not will be operating with a degree of exposure that is difficult to defend to boards and to regulators.

Guardrails cannot be delegated to the model alone. The controls that matter are distributed across orchestration vendors, cloud providers, model configuration, and prompt-level instructions. No single layer is sufficient, and no single vendor owns the stack. The organizations that understand this are building reusable, auditable guardrail frameworks now, and that investment pays forward. Governed agentic systems move faster, behave more predictably, and earn the institutional trust that allows deployment to scale. The guardrail is not the constraint on agentic AI. It is what makes ambitious agentic AI defensible.

Design, Govern, and Orchestrate AI

Connect with UsGartner, Deploy AI Guardrail Stack: The Minimum Standard for Responsible Enterprise AI Protection, 20 January 2026

GARTNER is a trademark of Gartner, Inc. and its affiliates.

FAQs

1. What are AI guardrails in agentic systems?

AI guardrails are structured controls that define what autonomous AI agents can access, generate, and execute, ensuring their actions stay aligned with enterprise governance, security, and risk requirements.

2. Why do agentic systems need guardrails more than traditional software?

Unlike deterministic software, agentic systems reason probabilistically and act autonomously. This creates unpredictable, compounding failure modes that require dedicated guardrails beyond standard access and input controls.

3. What risks do AI guardrails help prevent?

They help mitigate risks such as prompt injection attacks, data leakage (PII/PHI exposure), hallucinated outputs, biased decisions, and unsafe or unverified actions in automated workflows.